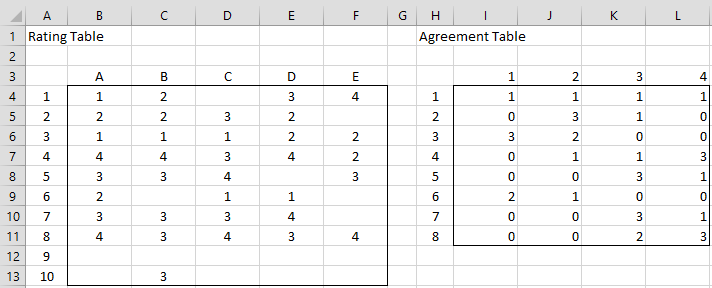

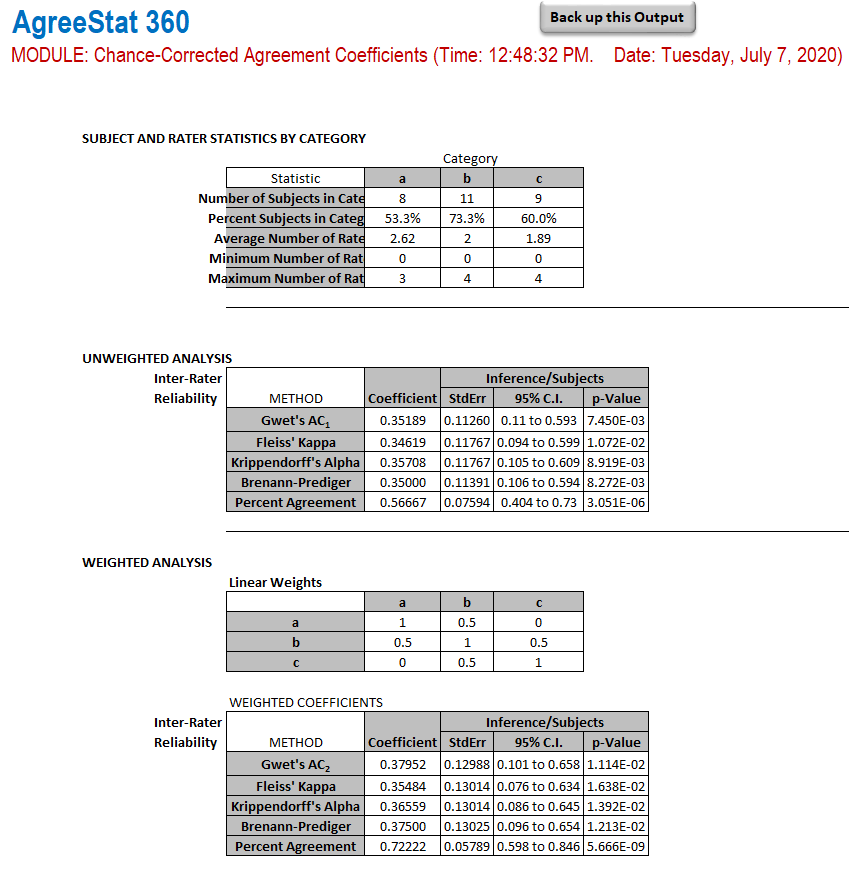

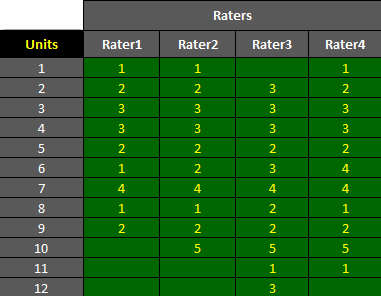

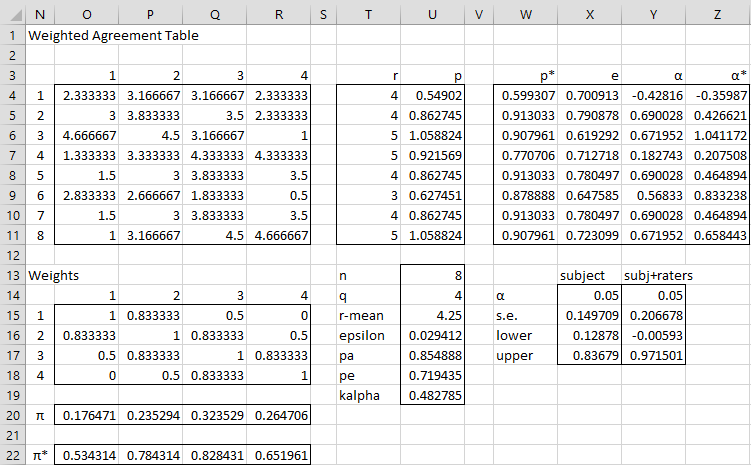

AgreeStat/360: computing weighted agreement coefficients (Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) with ratings in the form of a distribution of raters by subject and category

Measuring inter-rater reliability for nominal data – which coefficients and confidence intervals are appropriate? | BMC Medical Research Methodology | Full Text

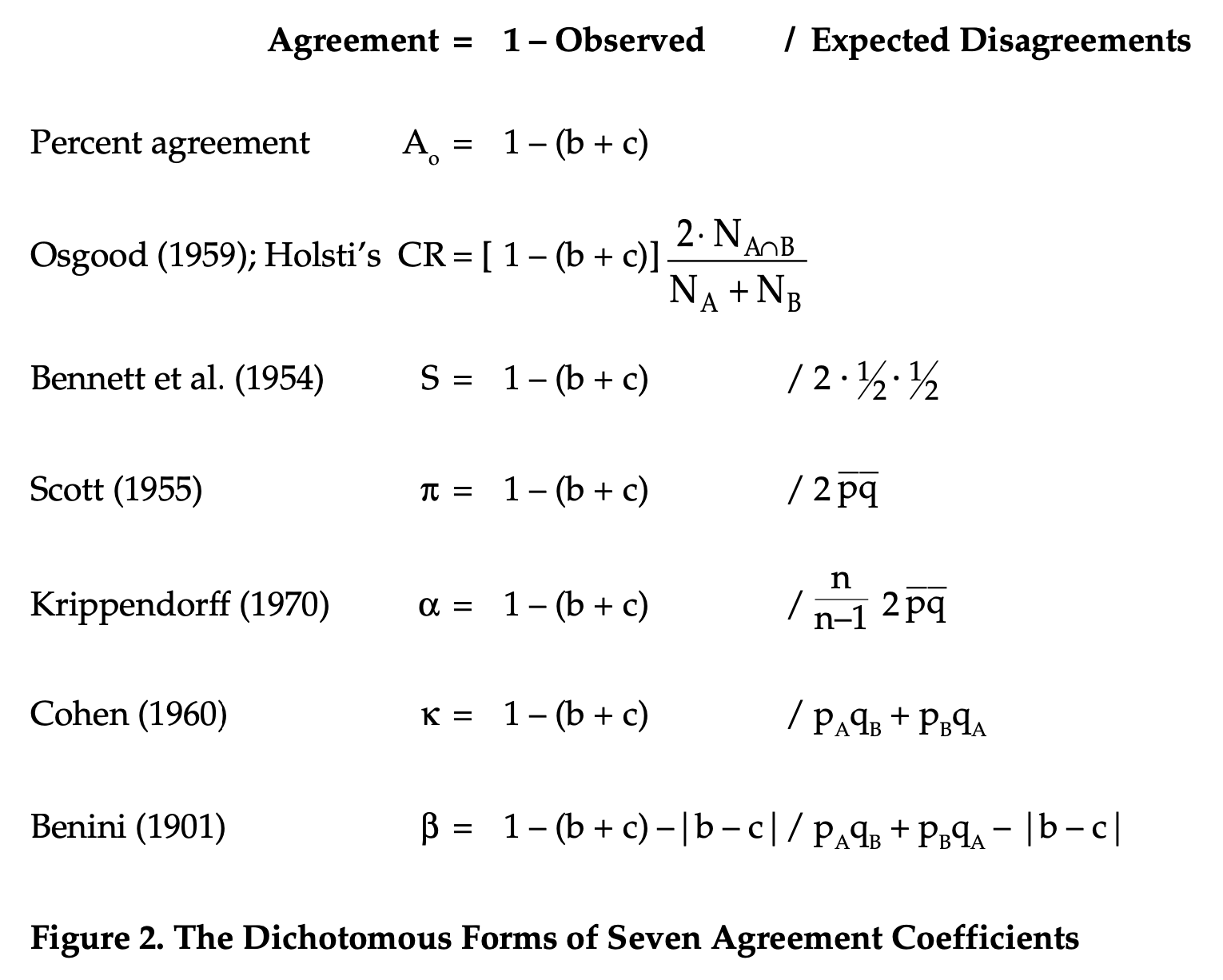

Worked Examples for Nominal Intercoder Reliability by Deen G. Freelon (deen@dfreelon.org) October 30, 2009 http://www.dfreelon.c

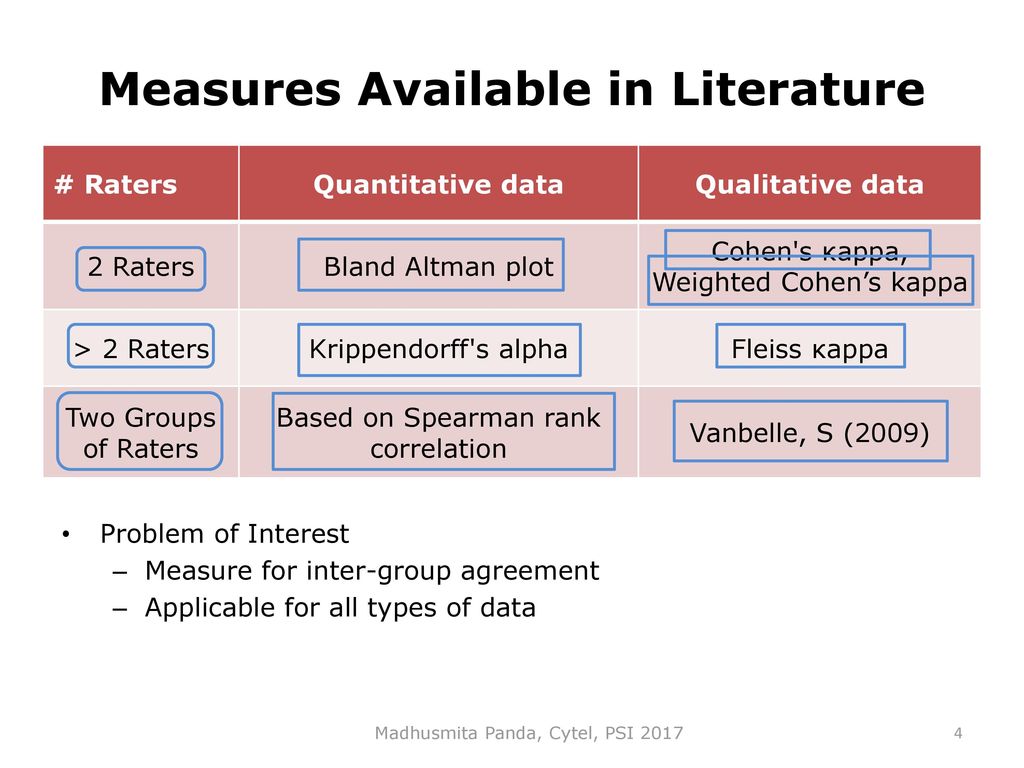

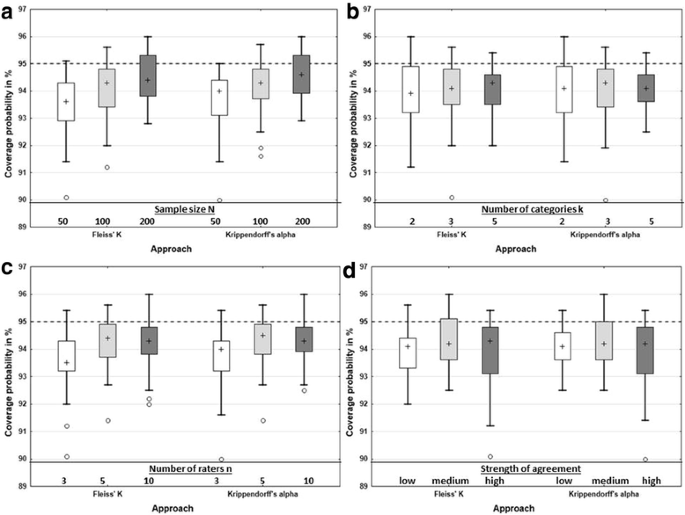

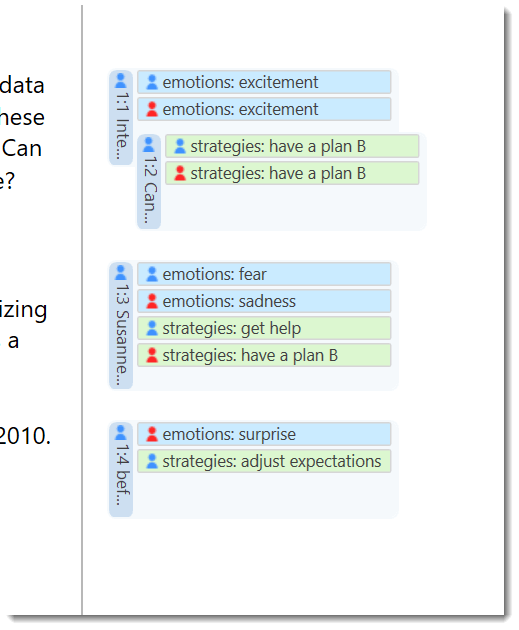

Weighted Krippendorff's alpha is a more reliable metrics for multi-coders ordinal annotations: experimental studies on emot

Using JMP and R integration to Assess Inter-rater Reliability in Diagnosing Penetrating Abdominal Injuries from MDCT Radiologica

Inter-rater agreement measured using Cohen's Kappa and Krippendorff's... | Download Scientific Diagram

Kappa and Krippendorff's Alpha Statistical Values Regarding the Scores... | Download Scientific Diagram

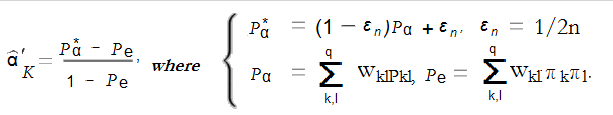

![On The Krippendorff's Alpha Coefficient (PDF) | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/90b246032379c922503fa8cdcfce56435a142148/5-Table2-1.png)

![On The Krippendorff's Alpha Coefficient (PDF) | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/90b246032379c922503fa8cdcfce56435a142148/12-Table4-1.png)